It’s 2030, 5:12 a.m., and the apartment is doing that pre-dawn thing where everything feels borrowed: the quiet, the warmth under a blanket, the courage it takes to be awake before you’re required to exist.

Rain stitches itself to the window in thin, patient lines.

I’m standing in the kitchen—bare feet on cold tile—holding a mug that’s too hot for the hour I’m in. There’s a glow on the glass that isn’t the streetlight and isn’t the moon, either. It’s not a neon sci‑fi interface. It’s more like a reflection you could almost mistake for your own tired eyes: faint, translucent shapes hovering at the edge of perception. A calendar dot. A heart icon. A little home-shaped symbol pulsing once, as if reminding me it’s still breathing.

I didn’t ask for any of it.

That’s what makes it unsettling.

Because the future of smart devices isn’t the dramatic moment when a robot speaks in a clean, reassuring voice. It’s the quieter moment when the world begins to anticipate you—and you can’t remember whether you invited it.

By 2030, the most profound change won’t be that our devices can do more. It’ll be that they’ll need less permission to act. They’ll move from tools you operate to environments that orchestrate—not by shouting instructions, but by gently tilting probability in your favor: nudging your schedule, smoothing your moods, re-timing your life like a song that can’t afford to lose the beat.

And the human question that follows—always the human question—is not “Can it?” but:

What will it cost us to live inside something that wants to help?

If you’re reading this on Medium, you already live at the edge of the future of work. Your attention is a currency. Your anxiety is a market. Your “productivity” is a story you’re told to want. You’ve felt the hot breath of the algorithm on your neck and called it opportunity.

Now imagine the algorithm doesn’t just recommend what you should watch.

Imagine it starts arranging your day.

Not as an app.

As air.

The world before “ambient”

In the beginning, smart devices were honest about what they were: rectangles with glass faces. You touched them. They responded. They were obedient in the way tools are obedient. You could close them. You could turn them over. You could pretend, when you needed peace, that they didn’t exist.

Even when the “smart” era arrived—smart thermostats, smart locks, smart speakers—it still carried a kind of visible boundary. You could point to the gadget and say: there is the thing that is watching.

Then came the decade of acceleration: the years when life leaked into screens and never fully returned. Work stopped being a place and became a state. Meetings multiplied like mold in a damp house. Notifications became a second pulse.

And then, almost casually, generative systems arrived—not as a single invention, but as a new layer of reality. A new default. The moment where language, images, and code became cheap enough to flood the world the way plastic did: convenient, everywhere, hard to live without, harder to clean up.

The cultural mood shifted from “wow” to “wait.”

Because hype can entertain you for a while, but eventually the bills come due. The economy begins to ask for proof. Employers begin to ask for output. People begin to ask for meaning.

Which is why the next phase isn’t just smarter devices. It’s devices that vanish into the seams of daily life.

Not wearable tech as fashion. Not smart homes as novelty. But a system of ambient AI—distributed across headphones, watches, glasses, appliances, vehicles—coordinated by agents that don’t simply answer questions, but execute intentions.

By 2030, the biggest interface may be the one you rarely see.

The historical arc: from taps to atmosphere

There’s a story we like to tell about technology: that it evolves the way humans evolve—linearly, morally, toward progress.

But technology doesn’t evolve like character. It evolves like markets. It goes where friction is expensive.

Each major shift in consumer tech has been a shift in friction:

- From desktop to smartphone: remove location friction.

- From typing to tapping: remove complexity friction.

- From apps to voice: remove interface friction.

- From commands to agents: remove decision friction.

Now the next friction is presence.

The question the market is asking is: How do we remove the burden of asking at all?

That is what “ambient” means.

Ambient doesn’t just mean “always on.” It means always near—always able to notice patterns across your day and translate them into interventions: suggestions, changes, automations, reminders, deferrals.

And that’s where the stakes get human.

Because the moment tech begins to remove decision friction, it begins to shape not just your schedule, but your self.

A day in 2030 (and the small ways it changes you)

Let’s imagine an ordinary Tuesday in 2030—not the glossy demo version, not the “AI utopia” ad. The Tuesday where you still forget to buy toothpaste.

Morning: the house that listens differently

You wake before the alarm because your sleep system noticed you drifting into a lighter stage of sleep and decided that waking you gently now would preserve your mood. The blinds shift a few degrees. The room warms by half a degree. A light in the kitchen blooms like sunrise, even though the sun is still negotiating with the clouds.

No voice says, “Good morning.” That would be theatrical.

Instead, the kitchen counter displays nothing. Your glasses display nothing. Your phone stays dark. But the environment has changed—quietly, precisely—to make the morning less sharp.

This is the new promise: not convenience as spectacle, but care as choreography.

But the question follows: whose definition of care is it using?

Commute: the assistant that knows your thresholds

On the way to work, your audio system shifts the playlist not based on what you “like,” but based on what you tend to choose on days when your calendar is heavy and your heart rate trends high. It routes you away from a traffic snarl before you hit it. It drafts a message to your manager that says, “Running 8 minutes late—will be there at 9:08,” and waits for your nod.

It’s helpful. It’s also intimate.

By 2030, intimacy won’t just be romantic. It will be behavioral: a system that sees you as you are in the gaps between what you say you want and what you repeatedly do.

Work: the agent economy inside the cubicle

You sit down and the meeting notes are already summarized. The follow-ups are assigned. The deck is half-built. The tasks that used to take a junior analyst three days now take an agent three minutes and a human thirty minutes of judgment.

This is where AI’s commercial impact becomes undeniable. Not because AI “feels smart,” but because it compresses labor: time, cost, headcount.

And compression always creates heat.

Because when labor compresses, power consolidates. The people who own the agents own the leverage. The people who can’t use them become the friction.

Evening: health monitoring as moral pressure

Your watch suggests you walk after dinner. You’ve seen this suggestion so many times it has become a kind of quiet shame. It doesn’t scold. It doesn’t nag. It simply lays the data down like a mirror.

The suggestion is well‑intended.

But intention is not the same as freedom.

And this is the psychological shift that defines 2030: smart devices will become a constant referendum on how well you are living.

Not just what you consume, but how you move, how you sleep, how you speak, how you spend.

A world of metrics is a world that tempts you to become measurable.

The new economics: why 2030 won’t feel like “gadgets” but like infrastructure

People talk about AI as if it’s a single product. But the real commercial story is less like a product launch and more like a utility buildout—like electricity, like broadband.

By 2030, smart devices will run less on individual “apps” and more on agentic layers—systems that sit above your tools and coordinate them.

This changes the economic structure in three ways:

1) Value shifts from features to orchestration

In the smartphone era, you paid for hardware and downloaded tools.

In the ambient era, the most valuable thing is the coordination—the system that can connect your calendar to your commute to your health markers to your home energy usage to your relationships.

The product becomes your life’s pattern.

2) Data becomes less “collected” and more “co-produced”

Data used to be something you generated incidentally. In 2030, data becomes something you generate collaboratively with your devices: your watch asks you to confirm how you feel, your assistant notices your tone, your environment reads your routines.

You don’t just leave digital footprints.

You become a feedback loop.

3) Trust becomes the real moat

Hardware can be copied. Models can be commoditized. Interfaces can be replicated.

Trust is harder.

Because an ambient system isn’t just a tool. It’s a presence. And presence requires social permission.

This is why the most important competitive advantage in the agent economy won’t be the “smartest model.” It will be the most forgivable system: the one people believe won’t embarrass them, betray them, or trap them.

The psychological trade: convenience versus authorship

Here’s the part we don’t say out loud: convenience is not neutral.

Convenience is a negotiation between what you want and what you’re willing to give up to get it.

In 2030, what you give up won’t always be money. It will be:

- attention (the device becomes the curator of your focus),

- memory (outsourced to systems that “remember for you”),

- agency (the slow drift from choosing to accepting),

- and, most subtly, authorship.

Authorship is the feeling that your life is being written by you.

When devices begin to “handle” your life, they also begin to edit it—removing friction, smoothing edges, shaving off randomness. That sounds good until you realize randomness is where your personality grows.

A perfectly optimized day is a day with no surprises.

And a life with no surprises is not a life; it’s a loop.

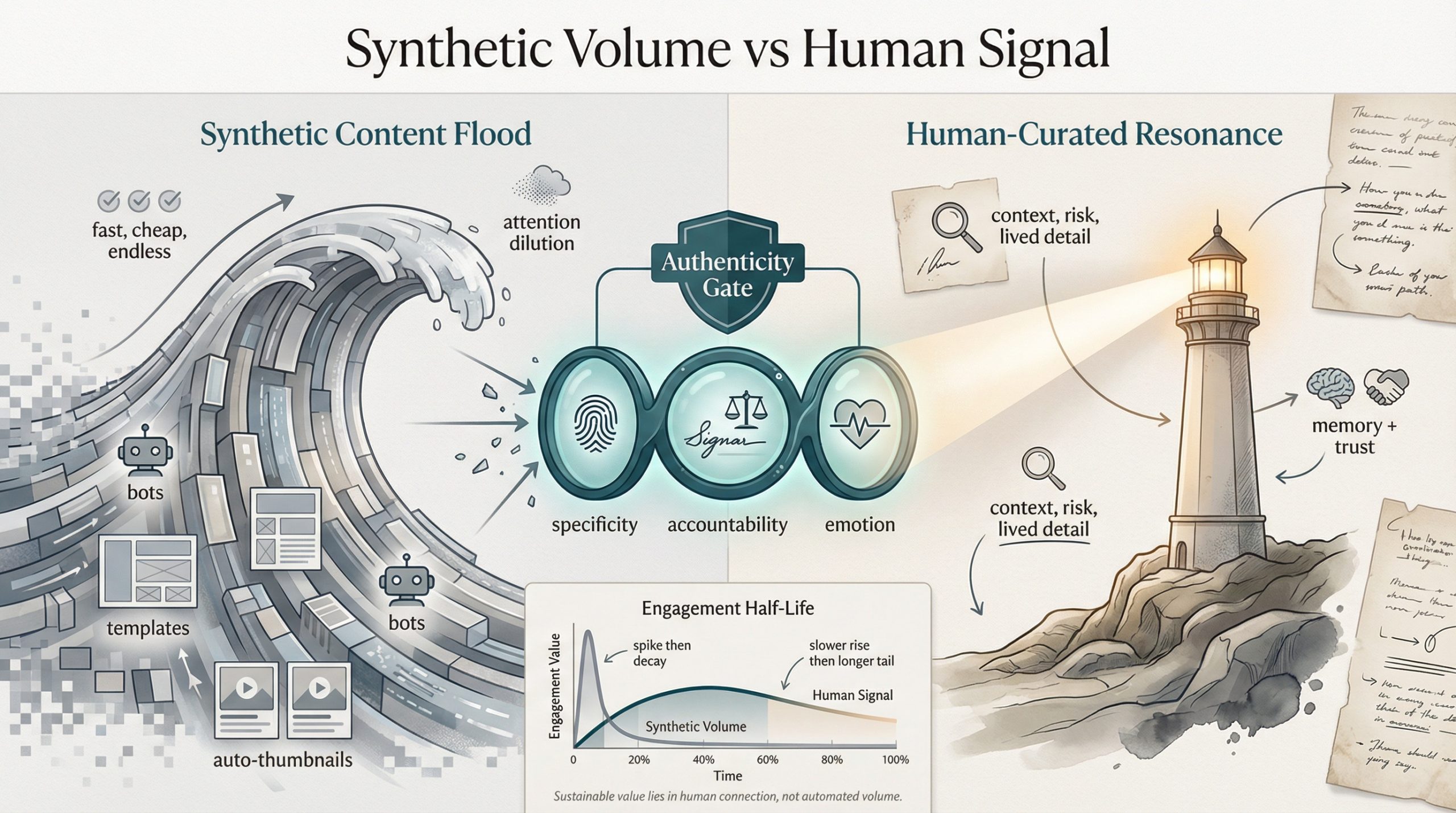

The content world by 2030: synthetic everywhere, meaning scarce

Smart devices won’t just change how we live. They’ll change what we are surrounded by: language, imagery, stories, persuasion.

By 2030, generative content won’t be a trend. It will be weather.

Most brands will generate oceans of micro-content tailored to mood, location, and timing. Most people will have agents that write their emails, summarize their messages, draft their apologies, and generate their posts.

A question will rise like smoke in the room:

If everything can be written, what feels real?

Here’s the paradox: when content becomes infinite, context becomes priceless.

Because context is what synthetic systems struggle to truly own—not the surface of language, but the lived texture underneath it. The subtle contradictions. The risk. The fact that humans can say something imperfect and still mean it.

In a world saturated by synthetic words, people will begin craving the things that can’t be scaled cleanly:

- stories that include shame,

- opinions that cost something,

- experiences that have a smell, a temperature, a consequence.

This isn’t nostalgia. It’s a psychological immune response.

When the environment is filled with convincing simulations, the human mind starts searching for proof of life.

Not proof that something is true.

Proof that someone was there.

The invisible politics of ambient AI: who gets to be “helped”?

Every technological shift has a quiet class system built into it.

In 2030, the premium life won’t be “more devices.” It will be better defaults:

- Better privacy design.

- Better fail-safes.

- Better transparency.

- Better customer support when the system gets it wrong.

- Better options to opt out without losing basic functionality.

For many people, ambient AI will arrive as a convenience.

For others, it will arrive as surveillance dressed as service.

Because when a system can notice and intervene, it can also judge and gatekeep:

- Insurance pricing adjusted by behavior.

- Job screening inferred from digital signals.

- Education paths “personalized” into narrower tracks.

- Housing access shaped by data you didn’t realize you were producing.

The future of daily life isn’t just technical. It’s moral.

And morality, in capitalism, is often something you have to pay extra for.

The human conflict: we want to be seen, but not watched

This is the emotional core of 2030:

Humans crave recognition. We want someone—or something—to notice us, to understand our patterns, to reduce our burden, to anticipate our needs without us having to beg.

But humans also crave sovereignty. We want the right to be messy, inconsistent, private, unoptimized. We want to be allowed to change our minds without generating a permanent record of our indecision.

Ambient AI offers recognition at scale.

It also offers watching at scale.

And those two feelings—comfort and threat—will live inside the same device.

Inside the same morning.

Inside the same kitchen at 5:12 a.m. while rain taps a quiet code against the window.

The new interface is not a screen. It’s a relationship.

In the 2010s, your relationship was with the app.

In the 2020s, it shifted to the feed.

From the late 2020s into 2030, it shifts to the agent: a system that has continuity. One that “knows” your history, your preferences, your tone, your patterns.

And continuity is how trust forms.

It’s also how dependency forms.

People will begin speaking about their assistants the way they speak about a capable coworker or a steady partner:

- “It reminds me when I’m spiraling.”

- “It knows how I like my mornings.”

- “It keeps me from falling behind.”

- “It’s the only reason I’m functioning.”

The line between tool and companion will blur—not because machines become human, but because humans are wired to bond with anything that reduces pain consistently.

This is not a sci‑fi prediction.

It’s a psychological one.

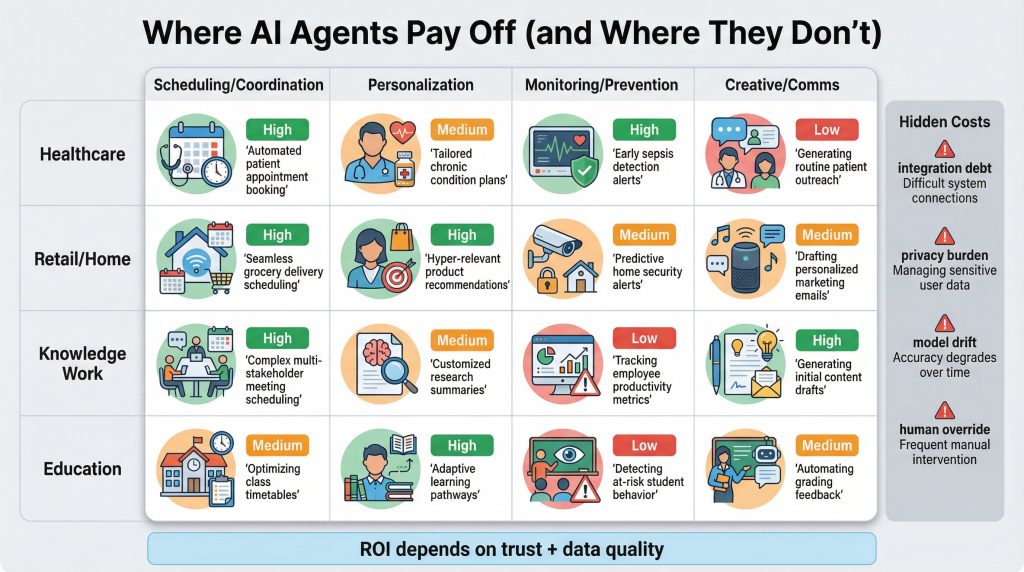

The ROI reality: where agents change life, and where they simply add noise

The promise of agents is that they save time, money, and cognitive load.

The reality is more uneven.

Some domains benefit massively from orchestration. Others get brittle, expensive, and exhausting—the “integration debt” of a life stitched together by systems that were not built to love each other.

The commercial impact will be clearest where:

- the data is structured,

- the outcomes are measurable,

- and the risk can be bounded.

But daily life is often the opposite: unstructured, emotional, and full of exceptions.

Which means the highest ROI isn’t always where you expect it.

You can feel the argument hiding inside that matrix:

- In healthcare, monitoring can prevent catastrophe—but it can also create a new kind of anxiety: the dread of being constantly evaluated by numbers that don’t understand your life.

- In retail/home, personalization can feel like magic—until it feels like being boxed into a version of yourself you’re trying to outgrow.

- In knowledge work, coordination agents can unlock a new level of leverage—until management uses them to demand that your output become machine-paced.

- In education, tutoring agents can democratize learning—until they quietly standardize curiosity into what is easiest to measure.

The same technology can liberate and shrink.

The difference is governance, culture, and—again—trust.

The hidden grief of an optimized life

There’s a particular sadness that arrives when your day runs too smoothly.

You don’t notice it at first. You’re grateful. The trains line up. The groceries appear. The messages send themselves. The calendar clears its own clutter.

But then you realize something:

You are no longer encountering your life the way you used to.

Not because the life is worse. Because it has been pre-processed.

A frictionless life is a life you do not wrestle with.

And wrestling—struggle, resistance, surprise—is one of the ways we become ourselves.

We romanticize struggle too much, yes. But we also pretend convenience is purely good. It isn’t. Convenience can be a kind of quiet erasure: of serendipity, of boredom, of the small failures that teach you what you actually value.

By 2030, one of the most radical acts may be choosing to keep some friction on purpose.

Not as a productivity hack.

As a way to remain human.

The future of work isn’t just automation. It’s expectation inflation.

One reason people feel exhausted in the modern world is not that they do more. It’s that the baseline of “enough” keeps moving.

When email became instantaneous, response time expectations changed.

When remote work became normal, availability expectations changed.

When AI agents can draft, summarize, schedule, and produce, the definition of a “normal workday” will change again.

By 2030, many workers won’t be competing against other workers.

They’ll be competing against the fantasy of machine-level throughput.

And because companies are not spiritual communities, the pressure will not come as cruelty. It will come as normalcy:

“This is just how things are done now.”

Which is why the human question will become:

What pace of life is worth the output it creates?

The contemplative punch: what will we ask our devices to help us become?

Back in the kitchen, the rain keeps going like it has nowhere else to be.

The faint icons on the window—calendar, heart, home—could be read as comfort. Proof that something is keeping track, that you don’t have to hold everything alone.

Or they could be read as a warning: proof that something is keeping track, that you are no longer alone even when you are alone.

The future is often described as a place.

But it’s more accurate to describe it as a feeling.

By 2030, smart devices will not just change what we do. They’ll change the atmosphere in which we do it: the texture of our mornings, the silence between messages, the meaning of privacy, the shape of work, the nature of attention, the stories we trust.

And the most important choice won’t be which device you buy.

It will be what kind of relationship you accept with the systems that surround you.

Because the deepest technology is not the one that answers questions.

It’s the one that quietly edits the person who is asking.

So here’s the question I want to leave you with—not as advice, not as a checklist, but as a mirror you can carry into the next decade:

When your devices can help you live any way you want… what will you ask them to help you become?